Harsh Reality: Part 4 – Bridging the Loudness Gap

So I’m going to try to wrap this series up once and for all. I’m not going to say I have all the answers in this post, but I will get into some stuff that has been working for me.

First, a little recap. The topic at hand is really mix translation. It’s my feeling that as technology continues to advance, our mixes increasingly need to translate beyond the rooms where they originate; more and more, FOH engineers are becoming FOH and studio engineers at the same time. The latest thing these days is the webcast, but it’s almost guaranteed there will be something else in the future.

Mix translation creates a couple of challenges for us, though. For starters, we have a dynamics issue because our programming can be spread across anywhere from 20 to 40 dB. Let’s look at some hypothetical SPL levels. We have spoken-word preaching averaging in the 60s up to the low/mid 70s. Then there’s our video content, which we run a little louder, around movie theater levels, averaging from the mid-70s into the 80s. And then there’s music, which will vary depending on the church, but for us it could be anywhere from the upper 80s to even touching 100 depending on the style or the event.

The way I see it, there are typically three ranges of loudness to contend with on a Sunday. Somehow they feel very natural in the room, but at the end of the day we’re talking about a potential 40 dB range of volume. Now, I might be a little old school in that I like dynamics in audio when I listen to things at home, but 40 dB is pretty severe. If you want to see for yourself, put something on at home at a comfortable level and then turn it down 40 dB. It’s probably not so comfortable anymore. Try it in the other direction if you dare...

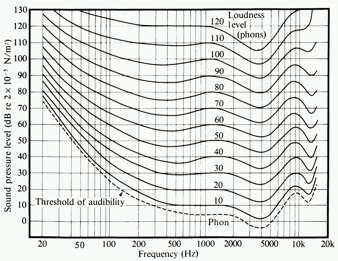

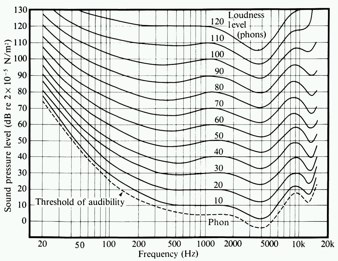
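If it helps to put numbers on that, here’s a quick bit of Python showing the standard decibel arithmetic (nothing console-specific): a level difference in dB corresponds to a sound-pressure ratio of 10^(dB/20) and an intensity ratio of 10^(dB/10). So a 40 dB spread means the loud material carries roughly 100 times the sound pressure and 10,000 times the intensity of the quiet material.

```python
def db_to_pressure_ratio(db: float) -> float:
    """Sound-pressure (amplitude) ratio for a level difference in dB."""
    return 10 ** (db / 20)


def db_to_power_ratio(db: float) -> float:
    """Acoustic intensity (power) ratio for a level difference in dB."""
    return 10 ** (db / 10)


# The 20 to 40 dB spread between spoken word and loud music on a Sunday
for gap_db in (20, 40):
    print(f"{gap_db} dB: {db_to_pressure_ratio(gap_db):g}x pressure, "
          f"{db_to_power_ratio(gap_db):g}x intensity")
```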

So part 1 of our translation issue is dealing with this loudness gap: simply bringing the levels of our programming closer together, preferably WITHOUT heavy or extreme compression, so the mixes translate outside our rooms. Of course, leveling things out brings another challenge thanks to our friend Equal Loudness (the Fletcher-Munson effect): our ears’ sensitivity to low and high frequencies relative to the midrange changes with volume, so our tonal perception shifts when we listen back to programming at a different volume than it was mixed at.

Let’s start with part 1: moving the mix from the BIG room to a smaller room. This started at FOH as a workaround for some internal latency issues on the console and turned into something I ended up implementing in the studio as well.

A few years ago I basically separated all of our programming into four categories: live music, spoken word, playback, and audience/ambience. I figured every feed we output from the console is going to need some combination of these categories. For example, the PA needs everything but the audience. The video control room needs all four. Our message CD recorders really only need spoken word and maybe a bit of audience. Another way to look at this is that each category is basically a stem mix that gets assigned to its own bus in the console. In my FOH world I use the LR bus for live music, a group for playback, and a group for spoken word; at FOH the audience mics are on their own since there aren’t many, but in the studio they get a bus.
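One way to picture the four-category setup is as a simple routing table where each output feed is just a set of stems. The sketch below is a hypothetical illustration (the stem and feed names are made up, not console labels) that turns the examples above into an on/off send matrix.

```python
# Hypothetical stem bus names for the four categories described above
STEMS = ("music", "spoken_word", "playback", "audience")

# Which stems each output feed needs, following the examples in the text
FEEDS = {
    "pa": {"music", "spoken_word", "playback"},
    "video_control": {"music", "spoken_word", "playback", "audience"},
    "message_cd": {"spoken_word", "audience"},
}


def routing_matrix(feeds, stems=STEMS):
    """Return {feed: [1 or 0 per stem]}, i.e. an on/off send matrix."""
    return {
        feed: [1 if stem in needed else 0 for stem in stems]
        for feed, needed in feeds.items()
    }


for feed, row in routing_matrix(FEEDS).items():
    print(f"{feed:14} {row}")
```

Adding a new destination (say, a webcast feed) then means adding one row rather than rebuilding a mix.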

This can be done on other consoles using subgroups and/or aux sends. In some settings aux sends might be the way to go, since the room size might mean your music mix won’t be properly balanced outside the room. If you’re in a little room with live drums and guitar amps, you might not have a lot of those elements in your room mix due to the spill coming from the stage. Using a couple of aux sends for music could give you the ability to do a custom mix for outside the room.

When it comes to leveling the programming material together, I use the PQ section on the VENUE; PQs are basically stereo matrices. Custom feeds/mixes are created by adding the needed busses to a PQ, and then the levels are adjusted so that the average level of the overall mix is balanced. A little compression is sometimes used on the PQ to help glue it all together and smooth out week-to-week variations in level. Pretty simple.
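The leveling step boils down to choosing a trim for each stem feeding the PQ. As a rough sketch, assuming you’ve metered an average level for each stem bus (the dBFS numbers below are made up for illustration) and want them all to land on a common target, the trims are just the differences. This is a simplification of what normally happens by ear, not a VENUE feature.

```python
def pq_trims(stem_averages_db, target_db):
    """Gain trim (dB) per stem so each stem's average level lands on target_db."""
    return {
        stem: round(target_db - level, 1)
        for stem, level in stem_averages_db.items()
    }


# Hypothetical average bus levels (dBFS) metered over one service
averages = {"music": -12.0, "playback": -18.0, "spoken_word": -26.0}
print(pq_trims(averages, target_db=-20.0))
```

Matching averages only gets you in the ballpark; a little compression on the PQ, as described above, handles what’s left.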

On consoles other than the VENUE, a matrix section is going to be a big help in making this work, but there are also ways to do it without one. If you’re bussing with aux sends and don’t have a matrix section, you can feed those aux sends out and bring them back in on open channels. Then you can level those individual channels and send them to a group or another aux send to get them out of the console. You can also send your stems to a smaller mixer for leveling if you’re running out of resources on your primary console. It might not be painless because you might have to give up some of your auxes and inputs, but you can do this without a high-end console if you think creatively with your busses.

Of course, we still have another issue to deal with: our friend Equal Loudness. One question that may still be hanging out there is how big a deal this whole Equal Loudness phenomenon is when it comes to mix translation. I think the best way to examine this is to actually listen to what happens. I’m embedding a couple of samples below to demonstrate (RSS readers will probably need to visit this post on the web to listen).

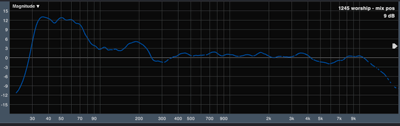

First, a couple of notes. The image above is a transfer function of the PA on the day this was mixed. As you can see, it’s pretty linear above 200 Hz, which means the PA isn’t coloring our room mix much. You should also note that the SPL was in the mid- to upper-90s dBA. Remember, the effect of Equal Loudness on mix translation will vary depending on the difference in volume between the mix environment and the outside listening environment.

The first sample below is the clean LR mix. This is what was going to the PA.

[audio:FM-demo/bless-clean.mp3]

The second sample is what feeds our CD burners for the rehearsal CDs our musicians and production crew use for reference. This sample features my work-in-progress equal loudness compensation EQ. The compensation curve was based on examining the difference in the Fletcher-Munson curves between our live mix volume and my studio mix volume, which is in the 79-82 dB ballpark at unity. I’m basically attempting to compensate for a perception difference about 15-20 dB below the original mix level.

[audio:FM-demo/bless-fm.mp3]
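The compensation curve described above comes from comparing equal-loudness contours at two listening levels. Here’s a minimal Python sketch of that comparison, assuming you’ve read the SPL values for each contour off a chart (ISO 226:2003 is the current standard set of equal-loudness contours). Both contours are normalized at 1 kHz so only their shapes are compared; a positive result means a boost is needed at the quieter playback level. The numbers in the usage line are toy values, not real contour data and not the curve used for the samples above.

```python
def compensation_curve(freqs_hz, contour_loud, contour_quiet, ref_hz=1000.0):
    """Boost/cut (dB) per frequency so quiet playback sounds tonally like the loud mix.

    contour_loud / contour_quiet: SPL (dB) needed at each frequency for equal
    loudness at the live level and at the playback level. Each contour is
    referenced to its own value at ref_hz, so only the shapes are compared.
    """
    i = freqs_hz.index(ref_hz)
    return [
        (quiet - contour_quiet[i]) - (loud - contour_loud[i])
        for loud, quiet in zip(contour_loud, contour_quiet)
    ]


# Toy placeholder values only; substitute readings from a real contour chart
freqs = [100, 1000, 10000]
print(compensation_curve(freqs, [100, 95, 97], [95, 75, 82]))
```

The output is only the "scientific" starting point; any preference-based tailoring would go on top of it.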

I’ve listened to these clips on a variety of what I would consider average “home” setups, and each experience was different. On some playback systems the difference was subtle and seemed somewhat insignificant. On other systems the first sample made me a little uncomfortable mainly because it just didn’t feel like a good representation of what was happening in the room. Now, I’m not going for a perfect translation because I think it will always be a challenge to pull a mix out of the vibe and energy of being in the room, but the first sample just feels flatter to me than what I remember the room experience to be. There is almost a cloudiness to it. I watched a little bit of the John Mayer at Red Rocks webcast on UStream last week, and my guess is it was a pretty straight board mix because it had some of that same quality.

The second sample, however, always played clearer to me. The first thing that stuck out was that the snare sounds much closer to my memory of it in the room. In some ways the second sample feels more intentional to me, but here’s another way I look at it. When I hear the first sample, I don’t really want to play it for anybody. The second sample, on the other hand, is something I wouldn’t mind playing for friends, family, and neighbors who don’t go to church. If I were inviting folks to visit us on the web during a service, I’d rather they hear the second sample than the first.

Here are two more samples. Again, the first one is the PA feed and the second one is the CD burner feed with EQ compensation.

[audio:FM-demo/bless3-clean.mp3]

[audio:FM-demo/bless3-fm.mp3]

Again, the second sample seems clearer to me and sounds much closer to the original feel of the house. Whether or not you feel like the compensation is truly necessary, I hope you can at least hear how Equal Loudness can affect mix translation when you mix at one volume and listen back at another.

On the practical side, setting up an EQ to make this compensation isn’t necessarily simple. That’s because I only want the EQ compensation on the live music, not on any of our other programming; in my world the loudness gap on our other programming isn’t big enough to warrant compensation. The trick I’ve landed on for now is to return my music mix to a stereo channel on the console. In VENUE world I have an EQ plugin that takes the LR bus as its input and simply returns to a stereo channel. This could also be done on just about any console by hardwiring a bus output to an input channel.
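To illustrate the idea of EQ’ing only the music stem, here’s a hypothetical Python sketch. The actual compensation EQ isn’t specified here, so a single low shelf (built from the well-known RBJ Audio EQ Cookbook formulas) stands in for it, and the 120 Hz / +4 dB settings are arbitrary placeholders. The music stem goes through the shelf, while the other stems are summed in untouched, which is what routing the LR bus through an EQ and back to a stereo channel accomplishes on the console.

```python
import math


def low_shelf_coeffs(fs, f0, gain_db, slope=1.0):
    """RBJ Audio EQ Cookbook low-shelf biquad, normalized so a0 == 1."""
    a = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * f0 / fs
    cos_w0 = math.cos(w0)
    alpha = math.sin(w0) / 2 * math.sqrt((a + 1 / a) * (1 / slope - 1) + 2)
    sq = 2 * math.sqrt(a) * alpha
    b0 = a * ((a + 1) - (a - 1) * cos_w0 + sq)
    b1 = 2 * a * ((a - 1) - (a + 1) * cos_w0)
    b2 = a * ((a + 1) - (a - 1) * cos_w0 - sq)
    a0 = (a + 1) + (a - 1) * cos_w0 + sq
    a1 = -2 * ((a - 1) + (a + 1) * cos_w0)
    a2 = (a + 1) + (a - 1) * cos_w0 - sq
    return [b0 / a0, b1 / a0, b2 / a0], [a1 / a0, a2 / a0]


def biquad(samples, b, a):
    """Direct Form I biquad over a list of samples."""
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in samples:
        y = b[0] * x + b[1] * x1 + b[2] * x2 - a[0] * y1 - a[1] * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out


def feed_mix(music, other_stems, fs=48000, shelf_hz=120.0, shelf_db=4.0):
    """Hypothetical feed: EQ only the music stem, then sum it with the other stems."""
    b, a = low_shelf_coeffs(fs, shelf_hz, shelf_db)
    eq_music = biquad(music, b, a)
    return [m + sum(rest) for m, *rest in zip(eq_music, *other_stems)]
```

A real equal-loudness curve would likely need more than one band (the high end shifts with level too), so treat the single shelf as a stand-in for the routing idea rather than a recommended EQ.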

Is the setup a little clunky? Perhaps. But it works, and once it’s set up I don’t have to think about it. Plus, I think future consoles are going to be better equipped for this kind of processing. Another advantage of doing it this way in VENUE world is that with my music bus sitting on an input channel, I can easily delay it back to my audience mics (thanks to Robert Scovill for that trick).
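Delaying the music bus back to the audience mics is mostly a distance calculation: the mics hear the PA acoustically, so they lag the bus by roughly the distance divided by the speed of sound. Here’s a minimal sketch, assuming about 343 m/s (air at around 20 °C) and a hypothetical 30 m from the PA to the mics. It ignores console and processing latency, so you’d still fine-tune by ear or with a measurement tool.

```python
SPEED_OF_SOUND_M_S = 343.0  # approx. in air at 20 °C; varies with temperature


def alignment_delay_ms(distance_m, speed=SPEED_OF_SOUND_M_S):
    """Delay (ms) for the music bus so it lines up with mics distance_m from the PA."""
    return distance_m / speed * 1000.0


def delay_in_samples(delay_ms, fs=48000):
    """Convert a delay in milliseconds to whole samples at sample rate fs."""
    return round(delay_ms * fs / 1000.0)


d_ms = alignment_delay_ms(30.0)  # hypothetical 30 m from PA to audience mics
print(f"{d_ms:.1f} ms = {delay_in_samples(d_ms)} samples at 48 kHz")
```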

Now, if you’re with me on the Equal Loudness stuff, how much compensation is actually necessary for mix translation will vary from church to church, given the wide range of volumes Sunday services run at. At any rate, I hope this gives you some ideas and things to think about if you’re looking at moving into broadcasting or webcasting, or even just improving your existing feeds. And remember, even if you don’t need to export your mix, there can still be advantages to figuring out how to create mixes that translate. Just think about the benefits of being able to evaluate things outside your room, or what a confidence booster it can be for musicians to get a rehearsal CD that sounds good in their car. Creating mixes that translate can open up a new realm of possibilities.

The song above is called Bless Your Name and is featured on North Point’s latest release.


Hi Dave,

How is your equal loudness EQ coming along? I’m playing with some board mixes from a recent event at our church, and have noticed the same thing. Your EQ’d tracks sound much better. Would you be able to share the compensation EQ you are using?

Justin.

I haven’t done much with it because I haven’t mixed in the room in a few weeks. I’ve found it’s best for me to only tweak it when it’s my mix.

Unfortunately, it’s not something I’m ready to share specifics on at this point. Part of the issue is I think this probably needs to be tuned on a case-by-case basis, since so much of it depends on PA optimization and SPL levels. Plus there are sort of two sides to it. The first is the scientific side, where you can look at the differences in the equal loudness curves and fashion an EQ to compensate for them. Then there’s the artistic side, where you can tailor things to preference.

For example, there are some things I think we (meaning me and the people I work with) like to hear in the room, especially in the low-mids, that we don’t usually get with a broadcast or commercial CD mix. I want to keep that stuff in the room mix, but take it out of everything else.

Right now I’m just sort of bouncing things down and dumping them into Pro Tools where I’m playing with an EQ in a familiar environment like my office or the studio.